Homeworks

There will be two problem sets per week. Each problem set specifies the programming language that you must use, the due date, and a purpose.

Homeworks that are due on Thursday are "finger" exercises, intended to give you additional practice with the current material (to "keep your fingers moving"), and will be automatically graded. They will be done solo and will cover what was learned that week (possibly up to and including Thursday's lecture). Homeworks that are due on Tuesday will be more involved, will be done with a partner, and will be graded by a combination of automatic grading and manual, line-by-line written feedback.

Autograding Feedback

After you upload a solution to Gradescope, you will receive immediate feedback from an autograder. The autograder will evaluate both your implementation and your test suite.

To evaluate your implementation, we run it against your own tests and against our tests. Your implementation should pass all of these tests. If it fails one of our tests, then you should debug your implementation. If it passes all of our tests but fails one of yours, then you should still reexamine your implementation, but you should also reexamine your test suite, since the test itself might be buggy.

To evaluate your test suite, we run it against your own implementation and against our implementations. This approach is based on "Executable Examples for Programming Problem Comprehension" by Wrenn and Krishnamurthi. Our implementations include a correct one, called the "gold," and a handful of (purposefully!) buggy implementations, called the "coals." The goal of your test suite is to distinguish the gold from the coals: the gold should pass your test suite, whereas every coal should fail it (i.e., each coal should fail at least one of your tests). If the gold fails your test suite, then you should debug your test suite. If a coal passes your test suite, then you should write more tests.
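The gold-and-coals idea can be illustrated with a minimal sketch. Everything here is hypothetical and chosen only for illustration: the problem (finding the largest element of a non-empty list), the two coals, the two test suites, and the helper names `passes` and `evaluate_suite`. It is not the actual autograder, and real assignments will use the language named in the problem set.

```python
def gold_max(xs):
    """Hypothetical gold: correct largest element of a non-empty list."""
    best = xs[0]
    for x in xs[1:]:
        if x > best:
            best = x
    return best


def coal_first(xs):
    """Hypothetical coal: buggy on purpose, always returns the first element."""
    return xs[0]


def coal_positive(xs):
    """Hypothetical coal: buggy on purpose, wrong when every element is negative."""
    best = 0
    for x in xs:
        if x > best:
            best = x
    return best


def weak_suite(impl):
    """A student test suite that only checks easy cases."""
    assert impl([3]) == 3
    assert impl([5, 2]) == 5


def strong_suite(impl):
    """A student test suite that also probes the behaviors the coals get wrong."""
    weak_suite(impl)
    assert impl([1, 4, 2]) == 4
    assert impl([-3, -1, -2]) == -1


def passes(suite, impl):
    """Return True if impl passes every assertion in suite."""
    try:
        suite(impl)
        return True
    except AssertionError:
        return False


def evaluate_suite(suite, gold, coals):
    """Report whether the gold passes and which coals the suite catches or misses."""
    return {
        "gold_passes": passes(suite, gold),
        "coals_caught": [c.__name__ for c in coals if not passes(suite, c)],
        "coals_missed": [c.__name__ for c in coals if passes(suite, c)],
    }


coals = [coal_first, coal_positive]
weak_report = evaluate_suite(weak_suite, gold_max, coals)
strong_report = evaluate_suite(strong_suite, gold_max, coals)
```

In this sketch the gold passes both suites, but `weak_suite` lets both coals through, which is the signal to write more tests. `strong_suite` catches both coals because it includes a case where the maximum is not first and a case where every element is negative.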

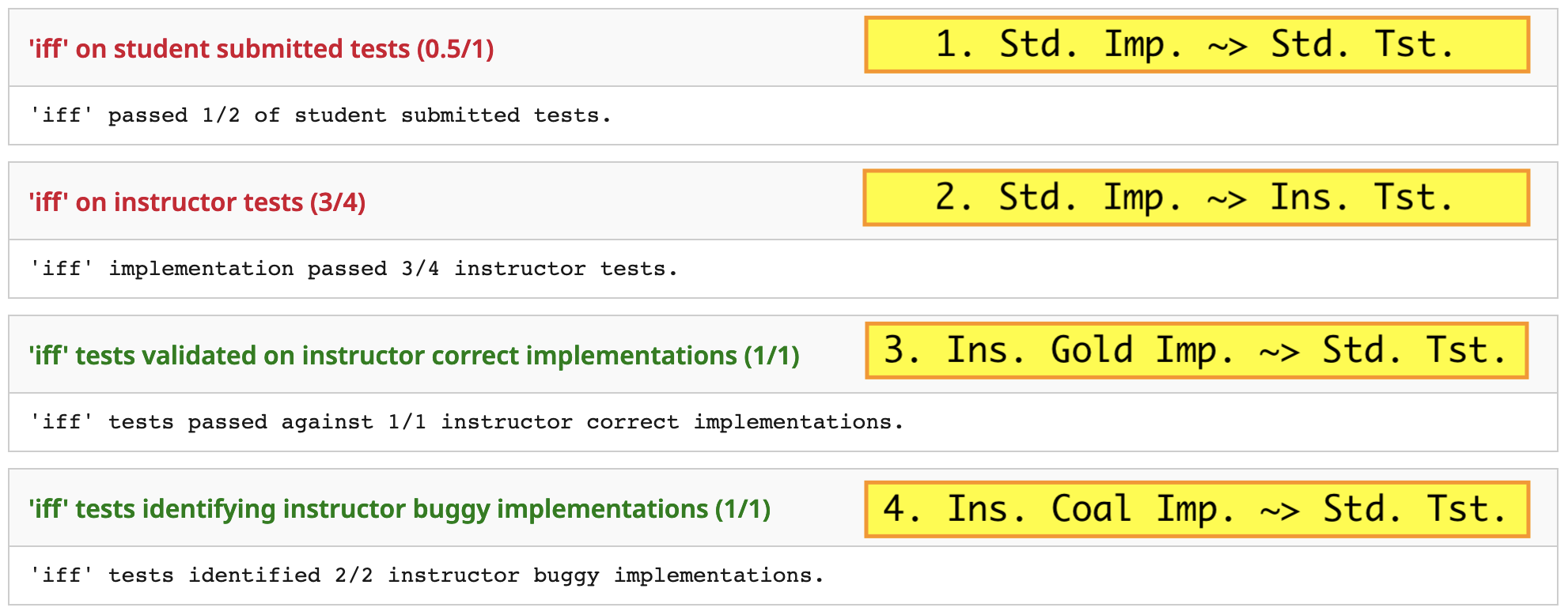

For example, consider the following output (with annotations added):

1. Student Implementation into Student Tests. The student implementation fails one of the student tests, so either the student implementation is buggy, or one of the student tests is buggy.

2. Student Implementation into Instructor Tests. The student implementation fails one of the instructor tests, so the student implementation is definitely buggy. Given the previous information, we still can’t be sure whether the test suite is buggy.

3. Instructor Gold Implementation into Student Tests. The gold passes the student test suite, so the test suite is not buggy. Given the previous information, we can conclude decisively that the failure in item 1 is caused by the implementation, not the test.

4. Instructor Coal Implementations into Student Tests. Every coal fails the student test suite, so the test suite covers a reasonable baseline set of behaviors. However, this does not mean that the suite is complete.
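The reasoning behind these four checks can be summarized as a small decision procedure. The sketch below is only an illustration of the rules stated in this section; the function `diagnose`, its parameter names, and the advice strings are hypothetical and do not correspond to anything the autograder actually prints.

```python
def diagnose(impl_passes_own_tests, impl_passes_instructor_tests,
             gold_passes_own_tests, coals_passing_own_tests):
    """Map the four autograder results to suggested next steps.

    The first three arguments are booleans; the last is the number of
    coals that (wrongly) pass the student test suite.
    """
    advice = []
    if not impl_passes_instructor_tests:
        # Failing an instructor test means the implementation is buggy.
        advice.append("debug your implementation")
    elif not impl_passes_own_tests:
        # Passing the instructor tests but failing your own is ambiguous.
        advice.append("reexamine your implementation and your test suite")
    if not gold_passes_own_tests:
        # A correct implementation failing your tests means a test is wrong.
        advice.append("debug your test suite")
    if coals_passing_own_tests > 0:
        # A buggy implementation slipping through means coverage is too thin.
        advice.append("write more tests")
    return advice


# The annotated example: the implementation fails a student test and an
# instructor test, the gold passes, and every coal is caught.
example = diagnose(False, False, True, 0)
```

For the annotated example, `example` contains only the advice to debug the implementation. An empty list means only that the autograder found nothing to flag.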

It’s important to note that passing all of the autograder tests does not mean that your implementation and test suite are completely correct. All it does is provide you with preliminary feedback so you can be sure you’re on the right track. For example, your implementation may pass our test suite but still have a bug. Alternatively, your test suite might catch all of our coals but miss some serious bugs.